Webinar

Visualizing Sensor Data and Prototyping ADAS/AD Algorithms (Forward Collision Warning Example)

17 Sep 2020 | 15:00-16:00 (GMT+8)

Online via Webex

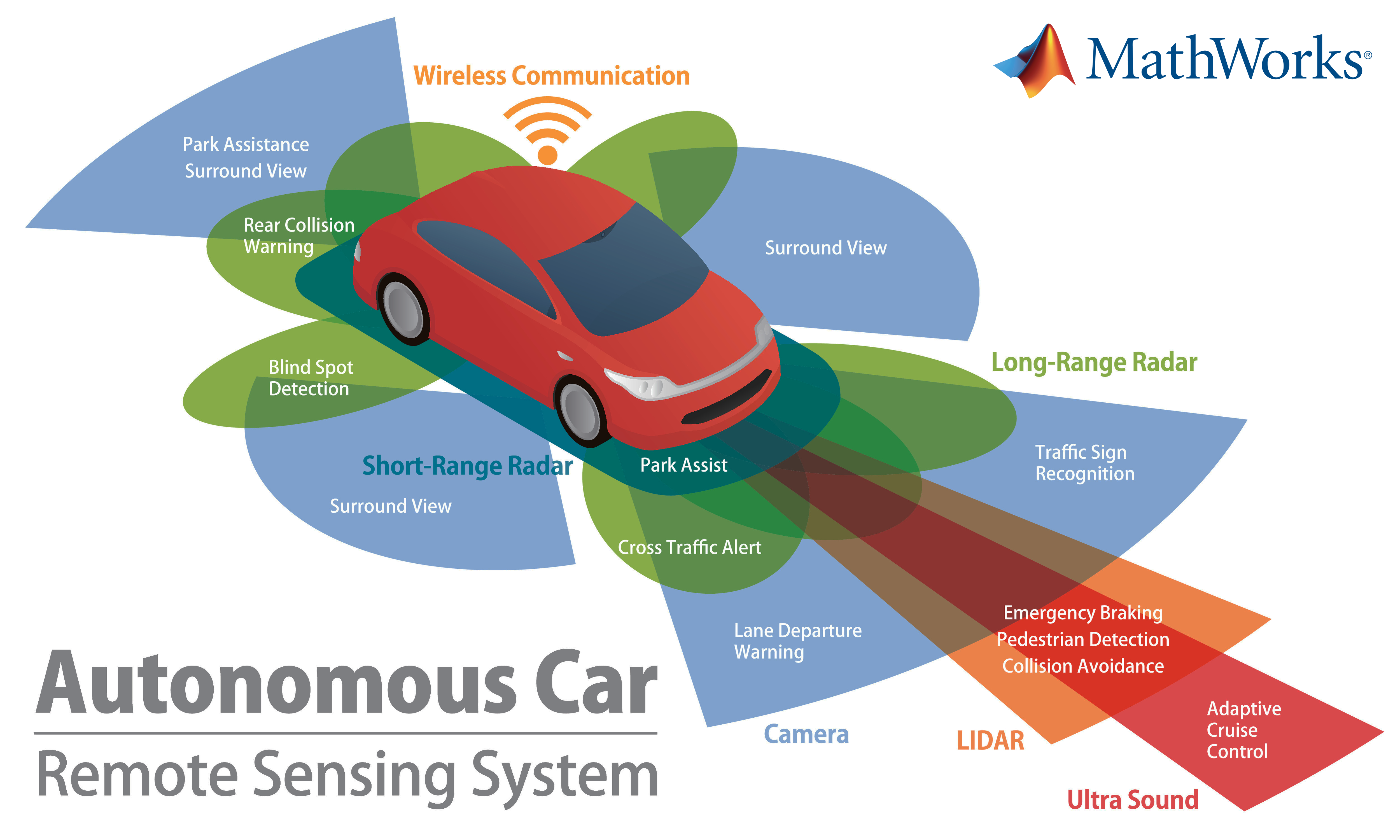

More Autonomy = More Sensors = More Raw Data to Visualize and Process

Full autonomy requires more sensors, and more sensors mean more raw data to process.

Several sensor types are available to AV manufacturers; radar, LiDAR, camera, audio, and thermal are the common options. Each sensor type has its own strengths and weaknesses and is better suited to some autonomous functions than others.